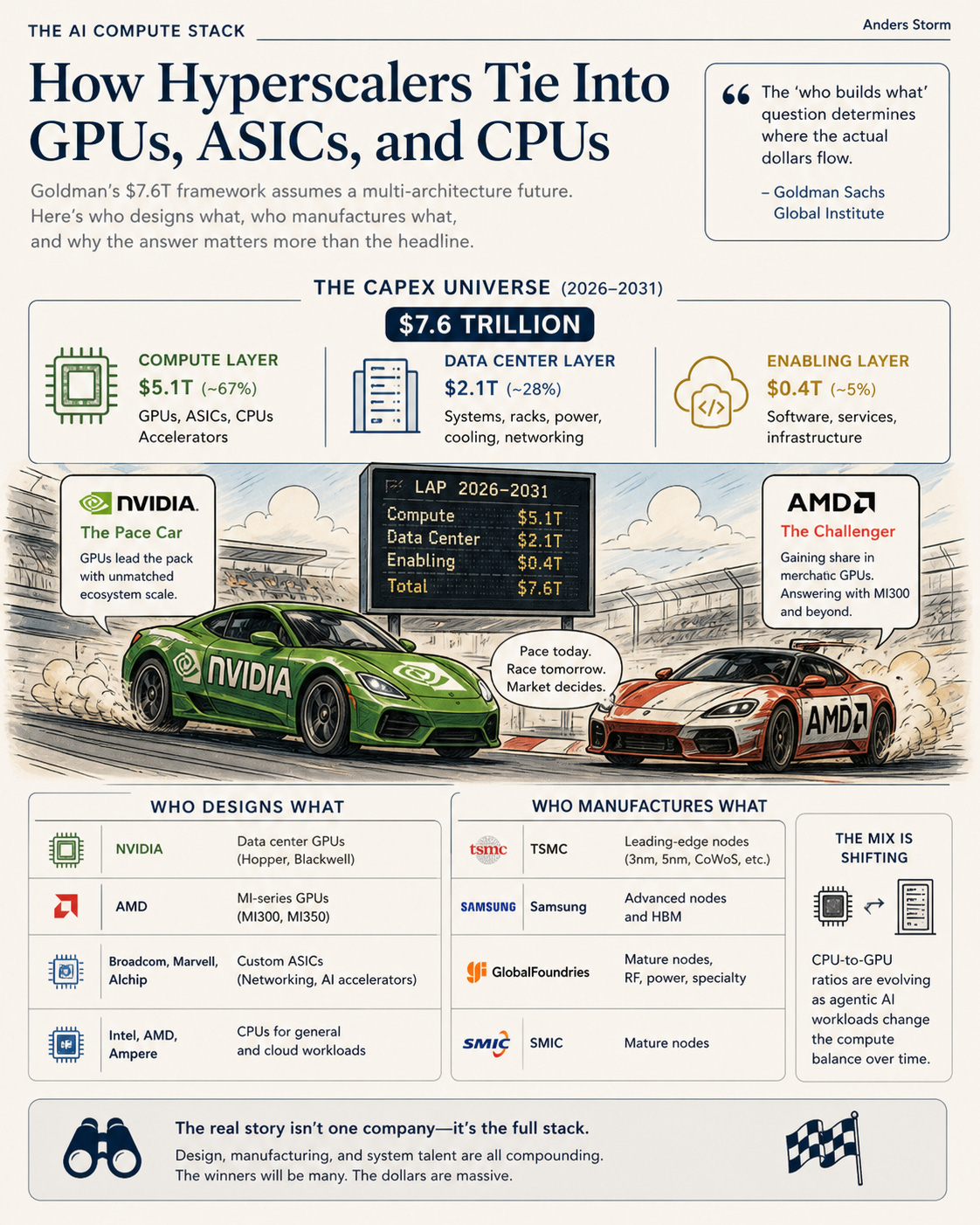

The AI Compute Stack: How Hyperscalers Tie Into GPUs, ASICs, and CPUs

Goldman's $7.6T framework assumes a multi-architecture future. Here's who designs what, who manufactures what, and why the answer matters more than the headline.

The AI capex narrative is dominated by a single name: NVIDIA. NVIDIA’s GPU dominance is real, but it is not the full story.

The reality is more interesting and more structurally important. Goldman Sachs Global Institute’s recent framework projects $7.6 trillion in AI capex from 2026 to 2031. Within that universe, the silicon stack is genuinely contested. Custom ASICs designed by Broadcom, Marvell, and Alchip are scaling at a 50% CAGR. AMD is gaining share at the merchant GPU layer (SemiAnalysis projects AMD's share doubling to ~10% by mid-2026). CPU-to-GPU ratios are shifting as agentic AI workloads change the compute mix.
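To make the 50% CAGR concrete, here is a minimal Python sketch of compound growth. The five-year horizon is an assumption chosen to match the annual steps in Goldman's 2026-2031 window. The source does not say over which period the 50% rate applies.

```python
def growth_multiple(cagr: float, years: int) -> float:
    """How many times larger a quantity becomes after `years` of compounding at `cagr`."""
    return (1.0 + cagr) ** years


def implied_cagr(start: float, end: float, years: int) -> float:
    """Constant annual growth rate that turns `start` into `end` over `years`."""
    return (end / start) ** (1.0 / years) - 1.0


# Assumption: the 50% rate holds for each of the five annual steps from 2026 to 2031.
multiple = growth_multiple(0.50, 5)
print(f"50% CAGR for 5 years -> {multiple:.2f}x the starting level")

# Round trip: the multiple maps back to the same annual rate.
print(f"implied CAGR: {implied_cagr(1.0, multiple, 5):.0%}")
```

Under that assumption, a 50% CAGR means roughly 7.6x the starting volume by the end of the window, which is why a segment growing at that rate can reshape the mix even from a small base.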

The “who builds what” question matters because it determines where the actual dollars flow. The answer is more diverse than the current NVIDIA-centric narrative suggests.

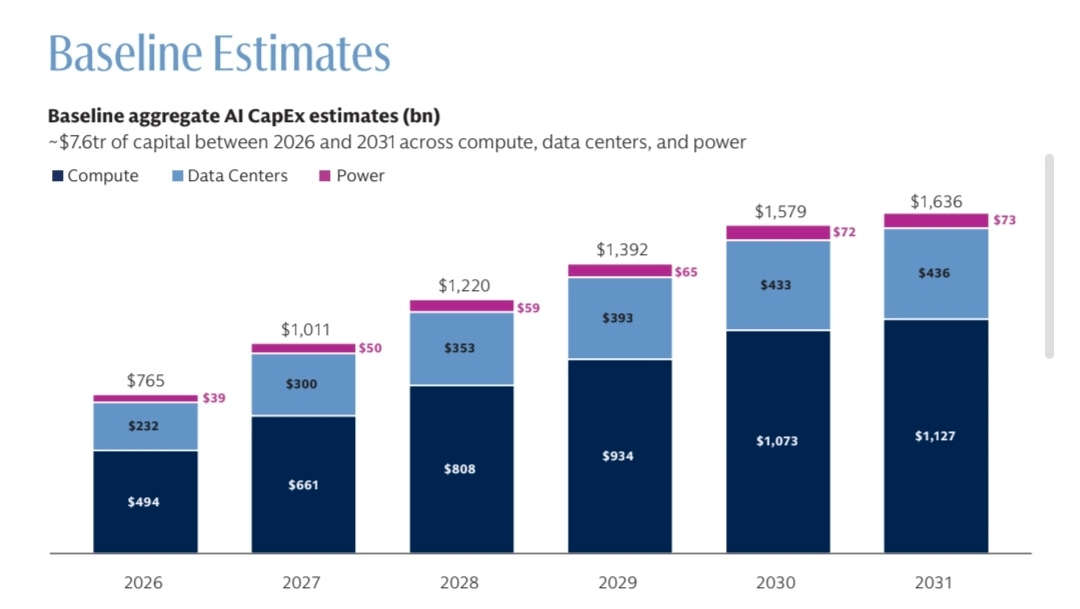

The Capex Universe

Goldman's framework breaks the $7.6T into three layers:

- Compute: $5.1T (~67%)

- Data Centers: $2.1T (~28%)

- Power: $358B (~5%)
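The breakdown can be checked with a minimal Python sketch that uses only the figures quoted above, in billions of USD. The three layers sum to $7,558B, so the headline $7.6T is a rounded figure. The percentages below are shares of that headline and fall within rounding of Goldman's numbers.

```python
# Layer totals in billions of USD, as quoted from Goldman's framework.
HEADLINE_TOTAL = 7_600
layers = {"Compute": 5_100, "Data Centers": 2_100, "Power": 358}

component_sum = sum(layers.values())  # 7,558: the headline is a rounded figure
shares = {name: value / HEADLINE_TOTAL for name, value in layers.items()}

for name, share in shares.items():
    print(f"{name:<13} ${layers[name]:>5,}B  {share:.1%}")
print(f"Sum of layers: ${component_sum:,}B vs ${HEADLINE_TOTAL:,}B headline")
```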

Hyperscaler concentration is striking. Combined hyperscaler capex reaches $660-690 billion in 2026 alone, led by Google, Microsoft, Amazon, and Meta. Approximately 75% of this is directed at AI-specific infrastructure. The buyers are concentrated. The sellers, less so.
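Applying the ~75% AI share to the 2026 range gives the AI-specific slice directly. This is a minimal sketch that treats 75% as exact, which the source does not claim.

```python
def ai_capex_range(low: float, high: float, ai_fraction: float) -> tuple[float, float]:
    """Scale a capex range (billions of USD) by the fraction directed at AI infrastructure."""
    return low * ai_fraction, high * ai_fraction


low, high = ai_capex_range(660, 690, 0.75)
print(f"AI-specific hyperscaler capex, 2026: ${low:.1f}B to ${high:.1f}B")
```

That works out to roughly half a trillion dollars of AI-specific spend in a single year, concentrated in a handful of buyers.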

Goldman's analysis identifies four assumptions that materially move the total: silicon useful life, data center cost per megawatt, chip architecture mix, and build-out elongation. The third assumption, chip architecture mix, is where the multi-architecture reality lives.
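Silicon useful life moves the total because shorter lives mean more frequent replacement. The sketch below is a simplified steady-state model. It assumes a constant-size installed base that is replaced on a fixed cycle (straight-line replacement). The $600B fleet value is a hypothetical round number, not a figure from Goldman.

```python
def annual_replacement_capex(fleet_value: float, useful_life_years: float) -> float:
    """Steady-state yearly spend to keep a constant-size fleet current (straight-line replacement)."""
    if useful_life_years <= 0:
        raise ValueError("useful life must be positive")
    return fleet_value / useful_life_years


HYPOTHETICAL_FLEET_VALUE = 600.0  # $B of installed accelerators, illustrative only

for life in (4, 5, 6):
    spend = annual_replacement_capex(HYPOTHETICAL_FLEET_VALUE, life)
    print(f"{life}-year life -> ${spend:.0f}B per year")
```

In this toy model, moving from a four-year to a six-year life cuts the steady-state replacement bill by a third. That is the mechanism that makes useful life one of the swing assumptions.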

The Merchant GPU Layer

NVIDIA dominates current AI compute. The H100, H200, and Blackwell B100/B200 generations have captured roughly 85-90% of the AI training and inference market. NVIDIA's gross margins run approximately 75% on data center silicon, reflecting both architectural advantages and CUDA software lock-in. But the merchant GPU layer is not single-vendor. AMD has emerged as a credible challenger with the Instinct MI300X, MI325X, and the upcoming MI350/MI400 series. AMD's strategy is straightforward: hyperscalers want a second source, and AMD provides one with reasonable performance at a meaningful cost discount.
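A 75% gross margin is easier to read as a price multiple. Because margin = (price - cost) / price, the price is cost / (1 - margin), so a 75% margin means the chip sells for 4x its cost of goods. In the minimal sketch below, the $10,000 unit cost and the 60% and 50% comparison margins are hypothetical. They only show how much room a lower-margin second source or in-house design has to undercut.

```python
def price_from_margin(unit_cost: float, gross_margin: float) -> float:
    """Selling price implied by a unit cost and a gross margin, where margin = (price - cost) / price."""
    if not 0 <= gross_margin < 1:
        raise ValueError("gross_margin must be in [0, 1)")
    return unit_cost / (1.0 - gross_margin)


HYPOTHETICAL_UNIT_COST = 10_000  # illustrative only, not a real bill-of-materials figure

# 75% is the margin cited in the text; 60% and 50% are hypothetical comparison points.
for margin in (0.75, 0.60, 0.50):
    price = price_from_margin(HYPOTHETICAL_UNIT_COST, margin)
    print(f"{margin:.0%} margin -> price ${price:,.0f} ({price / HYPOTHETICAL_UNIT_COST:.1f}x cost)")
```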

The MI300X has won deployments at Microsoft, Meta, and Oracle. The MI350 ramps in 2026. The MI400 series, planned for 2027, aggressively targets performance parity with NVIDIA's then-current generation. AMD's challenge isn't hardware; it's the maturity of the ROCm software ecosystem. Until ROCm credibly matches CUDA, AMD remains a second source rather than a true competitor.

Realistic 2028 merchant GPU share projections vary by analyst. SparkCo’s analysis of IDC and Gartner baselines provides three scenarios for AMD:

- Bull case: 25-30% share with $18-22 billion in revenue, driven by aggressive hyperscaler diversification.

- Base case: 15-20% share with $10-14 billion in revenue, in line with current MI300 deployments scaling.

- Bear case: <10% share if NVIDIA’s H200/H300 generation maintains exclusivity.
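When a scenario is quoted as both a share and a revenue figure, you can sanity-check it by backing out the total market it implies (revenue / share). The minimal sketch below does this for the bull and base ranges above. To get bounds, it pairs the low revenue with the high share and vice versa. That pairing is an assumption about how the ranges combine, not something SparkCo states.

```python
# SparkCo scenario ranges for AMD in 2028, as quoted:
# (share low, share high, revenue low $B, revenue high $B)
SCENARIOS = {
    "bull": (0.25, 0.30, 18.0, 22.0),
    "base": (0.15, 0.20, 10.0, 14.0),
}


def implied_market(share_low: float, share_high: float, rev_low: float, rev_high: float) -> tuple[float, float]:
    """Bounds on total merchant GPU revenue implied by a share range and a revenue range."""
    # Smallest market: lowest revenue at the highest share.
    # Largest market: highest revenue at the lowest share.
    return rev_low / share_high, rev_high / share_low


for name, (s_lo, s_hi, r_lo, r_hi) in SCENARIOS.items():
    lo, hi = implied_market(s_lo, s_hi, r_lo, r_hi)
    print(f"{name}: implied merchant GPU market ${lo:.0f}B to ${hi:.0f}B")
```

Comparing these implied totals against other published market-size estimates is a quick way to check whether a scenario's share and revenue figures come from the same baseline.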

The base case implies an NVIDIA-AMD merchant duopoly of roughly NVIDIA 70-80%, AMD 15-20%, and others <5% by 2028. AMD’s own forecasts from Lisa Su’s Advancing AI presentation are more aggressive ($500B+ TAM, 60% CAGR), though commentators including The Next Platform argue that both the AMD and Gartner projections may be miscalibrated in different directions.

The duopoly serves hyperscalers' interest in optionality for GPUs. But the larger structural shift is happening at a different layer: custom ASICs designed by Broadcom, Marvell, and Alchip.

Custom ASICs

Custom ASICs are projected to surpass merchant GPU shipments by volume in 2028.
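Whether and when ASIC volume overtakes GPU volume is a compounding race. Suppose GPUs currently ship r times as many units as ASICs, and the two grow at constant annual rates g_a (ASICs) and g_g (GPUs). Then the crossover comes after ln(r) / ln((1 + g_a) / (1 + g_g)) years. In the minimal sketch below, only the 50% ASIC growth rate comes from this article. The 2:1 starting ratio and the 20% GPU growth rate are hypothetical placeholders.

```python
import math


def crossover_years(gpu_to_asic_ratio: float, asic_growth: float, gpu_growth: float) -> float:
    """Years until ASIC unit volume overtakes GPU volume, assuming constant annual growth rates."""
    if gpu_to_asic_ratio <= 1.0:
        return 0.0  # ASICs already ship at least as many units
    if asic_growth <= gpu_growth:
        return math.inf  # ASICs never catch up under these assumptions
    # Solve r * (1 + g_g)**n == (1 + g_a)**n for n.
    return math.log(gpu_to_asic_ratio) / math.log((1 + asic_growth) / (1 + gpu_growth))


# Only the 50% ASIC growth rate is from the article; the other inputs are hypothetical.
HYPOTHETICAL_RATIO = 2.0        # GPUs ship 2x as many units as ASICs today
HYPOTHETICAL_GPU_GROWTH = 0.20

years = crossover_years(HYPOTHETICAL_RATIO, 0.50, HYPOTHETICAL_GPU_GROWTH)
print(f"ASIC volume overtakes GPU volume after ~{years:.1f} years")
```

The result is very sensitive to the growth gap: the closer the two rates, the further out the crossover.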

James Carter at Oplexa Insights writes: “NVIDIA built a $4.6 trillion empire selling one product: the world’s most powerful AI chip. But the companies that made Jensen Huang a legend are now building their own silicon, and the Custom ASIC market 2026 is the weapon they are using to do it.

“Google does not buy NVIDIA GPUs to run Gemini. Amazon does not pay NVIDIA margins to train its AI models. Meta is not using general-purpose accelerators to serve 3 billion users daily. They are all running custom Application-Specific Integrated Circuits, chips designed from scratch for one purpose, executing at maximum efficiency, at a fraction of the power cost.”